Accelerate JFrog Artifactory with Varnish Orca

JFrog Artifactory is a Universal Repository Manager that stores and delivers artifacts as part of the software supply chain. Artifactory provides private registries with centralized management and access controls for a wide variety of artifact types.

Although Artifactory provides significant value to organizations, it also comes with distinct performance and scalability challenges. This tutorial explains how Varnish Orca addresses these issues and improves performance at massive scale.

How do you access registries in Artifactory?

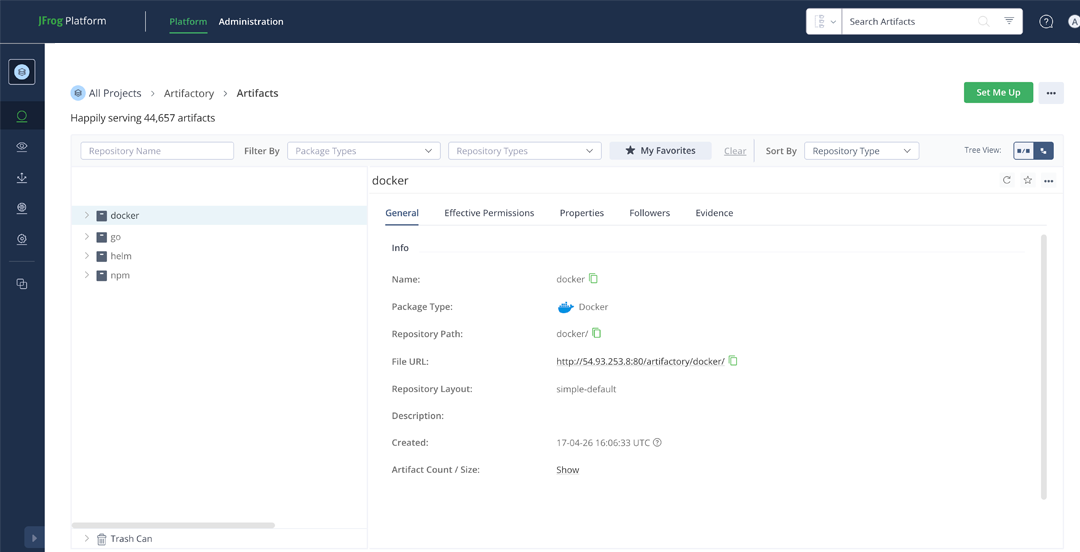

As you can see in the image below, you can create different registries that match the artifact types you want to host on Artifactory:

Artifacts hosted on Artifactory are fetched with the native client for their artifact type.

This could be a docker pull, an npm install, a helm install, or a go get.

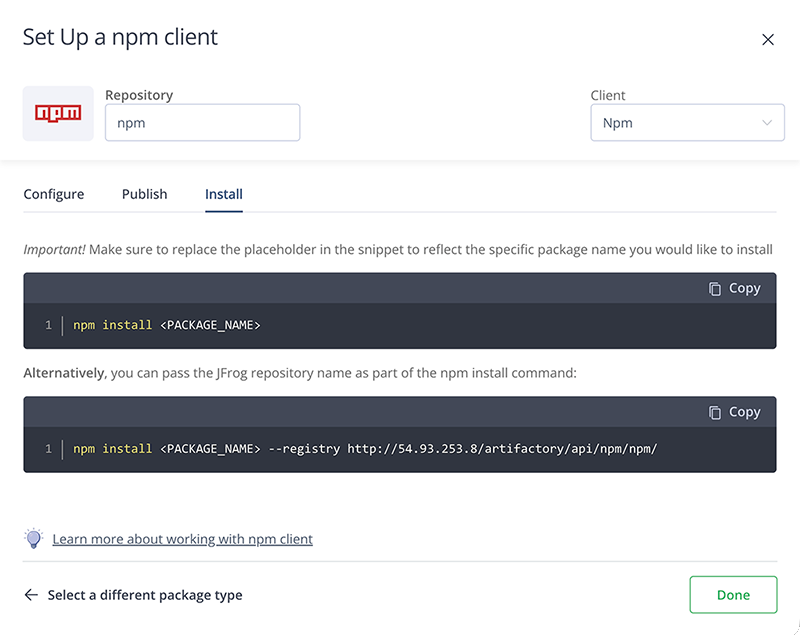

Here’s an example of how JFrog Artifactory documents client configuration for the NPM registry:

Artifactory performance and scalability issues

Because JFrog Artifactory supports so many artifact types, it is used by many parts of the software supply chain:

- CI jobs can run in Docker images that are hosted on Artifactory

- CI jobs can fetch language dependencies whose packages are hosted on Artifactory

- CD runners deploy Helm charts that are hosted on Artifactory

- Helm deployments rely on Docker images that are hosted on Artifactory

- Individual developers pull in dependencies hosted on Artifactory while developing software

- Infrastructure-as-code tools use scripts, packages, and files that are hosted on Artifactory

Together, these use cases put a lot of pressure on your Artifactory setup, and with certain traffic patterns across a large set of artifacts, performance degrades.

While network pressure is a factor, the primary challenge with JFrog Artifactory is that it is database-bound. A high volume of concurrent requests across a wide variety of artifacts can overwhelm the database layer, degrading performance across your entire artifact delivery pipeline.

Adding a virtual registry

Rather than scaling the underlying hardware vertically or adding Artifactory nodes to scale horizontally, you can offload that pressure to a virtual registry.

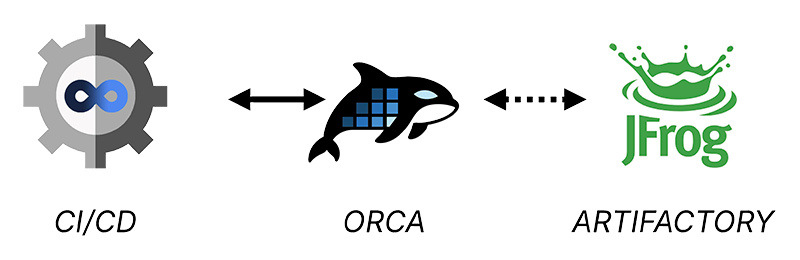

As the diagram below illustrates: Varnish Virtual Registry (Orca) can be added in front of JFrog Artifactory as a highly specialized reverse caching proxy.

Clients fetch artifacts from Orca instead of directly from Artifactory. If an artifact is found in the cache, Orca delivers it immediately; if not, Orca fetches the requested artifact from Artifactory, caches it, and delivers it to the client.
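The hit/miss flow described above can be sketched in a few lines of Go. This is a hypothetical teaching example, not Orca's implementation: the readThroughCache type and the originRequests helper are names made up for this sketch, a local test server stands in for Artifactory, and real concerns such as expiry, revalidation, streaming, and access control are deliberately left out.

```go
package main

import (
	"fmt"
	"io"
	"net/http"
	"net/http/httptest"
	"sync"
)

// readThroughCache illustrates the hit/miss flow of a caching reverse proxy.
// Hypothetical sketch: no TTLs, no eviction, no streaming, and no coalescing
// of concurrent misses for the same artifact.
type readThroughCache struct {
	origin  string            // base URL of the origin (Artifactory in this tutorial)
	mu      sync.Mutex        // guards entries and misses
	entries map[string][]byte // cached response bodies, keyed by request path
	misses  int               // number of requests forwarded to the origin
}

func (c *readThroughCache) ServeHTTP(w http.ResponseWriter, r *http.Request) {
	key := r.URL.Path

	c.mu.Lock()
	body, hit := c.entries[key]
	c.mu.Unlock()
	if hit {
		w.Write(body) // cache hit: the origin is not contacted
		return
	}

	// Cache miss: fetch the artifact from the origin.
	c.mu.Lock()
	c.misses++
	c.mu.Unlock()
	resp, err := http.Get(c.origin + key)
	if err != nil {
		http.Error(w, err.Error(), http.StatusBadGateway)
		return
	}
	defer resp.Body.Close()
	body, err = io.ReadAll(resp.Body)
	if err != nil {
		http.Error(w, err.Error(), http.StatusBadGateway)
		return
	}
	if resp.StatusCode == http.StatusOK {
		c.mu.Lock()
		c.entries[key] = body // only successful responses are stored
		c.mu.Unlock()
	}
	w.WriteHeader(resp.StatusCode)
	w.Write(body)
}

// originRequests sends n identical requests through the cache and returns
// how many of them reached the origin.
func originRequests(n int) int {
	origin := httptest.NewServer(http.HandlerFunc(func(w http.ResponseWriter, r *http.Request) {
		fmt.Fprintf(w, "artifact bytes for %s", r.URL.Path)
	}))
	defer origin.Close()

	cache := &readThroughCache{origin: origin.URL, entries: map[string][]byte{}}
	proxy := httptest.NewServer(cache)
	defer proxy.Close()

	for i := 0; i < n; i++ {
		resp, err := http.Get(proxy.URL + "/example/artifact-1.0.0.tgz")
		if err != nil {
			panic(err)
		}
		io.Copy(io.Discard, resp.Body)
		resp.Body.Close()
	}

	cache.mu.Lock()
	defer cache.mu.Unlock()
	return cache.misses
}

func main() {
	fmt.Println("origin requests for 3 client requests:", originRequests(3))
}
```

Running it prints `origin requests for 3 client requests: 1`: the first request is a miss, and the next two are served from memory. Because this sketch does not coalesce concurrent misses, several parallel first requests for the same artifact would each reach the origin.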

The Varnish Virtual Registry (Orca) is built on top of Varnish Enterprise and is optimized to handle artifacts in a protocol-native way:

- Orca detects artifact types based on client request headers and the URL

- Orca knows how to handle manifests for the different artifact types

- Orca is optimized to cache the underlying files and layers of the different artifact types

- Orca can enforce JFrog Artifactory’s access controls without cache duplication
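To make protocol-native handling more concrete, here is a hypothetical Go sketch of the kind of decision involved: guessing the artifact type from the URL and the User-Agent header, and deciding whether a response is immutable. The URL shapes follow the public Docker/OCI distribution API (/v2/.../blobs/ and /v2/.../manifests/) and the GOPROXY protocol (/@v/). The classify function, the type names, and the sample paths are assumptions for illustration only and do not reflect Orca's actual detection rules.

```go
package main

import (
	"fmt"
	"strings"
)

// artifactKind is a hypothetical label for the protocol a request belongs to.
type artifactKind string

const (
	kindOCI     artifactKind = "oci"     // Docker/OCI distribution API
	kindGo      artifactKind = "go"      // Go module proxy (GOPROXY) protocol
	kindNPM     artifactKind = "npm"     // npm registry API
	kindUnknown artifactKind = "unknown" // anything these heuristics do not recognise
)

// classify guesses the artifact type of a request from its URL path and
// User-Agent header, and reports whether the response is immutable.
// These heuristics are illustrative assumptions, not Orca's rules.
func classify(path, userAgent string) (kind artifactKind, immutable bool) {
	switch {
	case strings.HasPrefix(path, "/v2/"):
		// Blobs are always addressed by digest; manifests are immutable
		// only when requested by digest rather than by tag.
		return kindOCI, strings.Contains(path, "/blobs/") ||
			strings.Contains(path, "/manifests/sha256:")
	case strings.Contains(path, "/@v/"):
		// @v/list grows as versions are published; the .info, .mod and
		// .zip files of a released version do not change.
		return kindGo, !strings.HasSuffix(path, "/@v/list")
	case strings.HasPrefix(userAgent, "npm/"):
		// Tarballs are immutable; package metadata documents are not.
		return kindNPM, strings.HasSuffix(path, ".tgz")
	}
	return kindUnknown, false
}

func main() {
	// Sample requests; paths and header values are made up for illustration.
	samples := []struct{ path, userAgent string }{
		{"/v2/docker/todo/manifests/latest", ""},
		{"/v2/docker/todo/blobs/sha256:0123abcd", ""},
		{"/github.com/pkg/errors/@v/list", ""},
		{"/github.com/pkg/errors/@v/v0.9.1.zip", ""},
		{"/left-pad", "npm/10.2.4 node/v20.10.0 linux x64"},
		{"/left-pad/-/left-pad-1.3.0.tgz", "npm/10.2.4 node/v20.10.0 linux x64"},
	}
	for _, s := range samples {
		kind, immutable := classify(s.path, s.userAgent)
		fmt.Printf("%-40s %-7s immutable=%t\n", s.path, kind, immutable)
	}
}
```

The distinction matters for caching: a blob addressed by its sha256 digest or a released Go module zip never changes, whereas a manifest fetched by a tag such as latest, an @v/list response, or npm package metadata can change whenever a new version is published.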

Deploying Varnish Orca

Varnish Orca is easy to install and deploy:

- We offer an official Docker image

- You can orchestrate that Docker image using our official Helm chart

- You can install Orca on Linux servers using our DEB and RPM packages

Have a look at our install guide for more information.

A typical deployment pattern is to install Orca close to your JFrog Artifactory setup, whether Artifactory is self-hosted or a SaaS deployment in the cloud.

Besides offloading your Artifactory server, you can also reduce network traffic and latency by positioning Orca instances exactly where you need them.

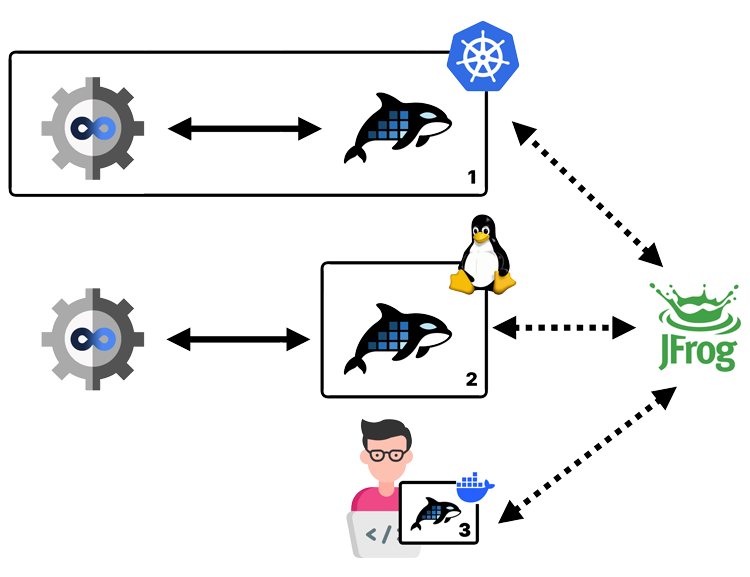

The diagram below shows a multi-site deployment where Orca instances are strategically positioned:

- There is an Orca instance deployed in the same Kubernetes cluster as the self-hosted CI runner

- There is an Orca instance installed on a Linux machine close to the centrally hosted CI/CD pipeline

- Orca can even run in a Docker container on a developer’s computer

The Orca deployments connect to the same Artifactory setup and offer the following benefits:

- They offload pressure from Artifactory, ensuring Artifactory performs at scale

- They offload the network and reduce network latency when fetching artifacts by being physically close to the consumer

- By offloading the network, you also reduce potential egress charges

Configuring Orca

Configuring Orca is straightforward and only requires a single config.yaml file.

The Orca configuration documentation is the reference for this config file. The install guide explains how to deploy that configuration file.

Here’s an example of an Orca config file that offers support for JFrog Artifactory:

```yaml
varnish:
  https:
    - port: 443
acme:
  email: user@domain.com
  domains:
    - artifactory.orca.example.com
  ca_server: production
license:
  file: /app/license.lic
virtual_registry:
  registries:
    - name: artifactory
      default: true
      remotes:
        - url: https://artifactory.example.com
```

TLS configuration

With this hypothetical configuration, Orca accepts HTTPS traffic on port 443. The TLS certificate for artifactory.orca.example.com is requested from Let’s Encrypt using the ACME protocol.

Have a look at the TLS configuration tutorial for more information.

License configuration

Some of the premium features in Varnish Orca require a commercial license. The example config loads that license from /app/license.lic on disk.

Have a look at the custom license registration tutorial for more information.

Virtual Registry configuration

The example configuration has a single virtual registry entry named artifactory. Its remote points to https://artifactory.example.com, the hypothetical URL of your JFrog Artifactory service.

The default: true setting ensures that every request on the Orca server is handled by this registry, and therefore ends up reaching Artifactory, even if the hostname does not match artifactory.*.
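The routing behaviour described above can be modelled in a few lines of Go. This is a hypothetical sketch, not Orca's code: it assumes that a registry named artifactory matches hostnames starting with artifactory., and that the registry marked default: true receives every request no other registry matches. The registry type and the route function are illustrative names; the virtual registry documentation is the authority on the exact matching rules.

```go
package main

import (
	"fmt"
	"strings"
)

// registry models the fields of a virtual_registry.registries entry used in
// the example config.yaml. Only the first remote URL is kept for brevity.
type registry struct {
	Name    string
	Default bool
	Remote  string
}

// route selects the registry for an incoming Host header. Assumed rules:
// a registry matches hosts that start with "<name>.", and the registry
// marked Default handles any request that no registry matches by name.
func route(host string, registries []registry) (registry, bool) {
	host = strings.ToLower(host)
	if i := strings.LastIndexByte(host, ':'); i >= 0 {
		host = host[:i] // drop an optional port (IPv6 literals are not handled)
	}
	for _, r := range registries {
		if strings.HasPrefix(host, r.Name+".") {
			return r, true
		}
	}
	for _, r := range registries {
		if r.Default {
			return r, true
		}
	}
	return registry{}, false // no name match and no default registry
}

func main() {
	registries := []registry{
		{Name: "artifactory", Default: true, Remote: "https://artifactory.example.com"},
	}
	for _, host := range []string{"artifactory.orca.example.com", "orca.internal:443", "10.0.0.5"} {
		r, ok := route(host, registries)
		fmt.Printf("%-30s -> %s (matched: %t)\n", host, r.Remote, ok)
	}
}
```

With the example configuration, artifactory.orca.example.com matches by name, while a request for another hostname or a bare IP address only reaches Artifactory because of default: true.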

Have a look at the virtual registry configuration section of the documentation for more information.

Fetching your artifacts from Orca

Now that Varnish Orca is deployed and configured, you can fetch artifacts from it.

If you point the DNS record of your Artifactory hostname to the IP address of your Orca setup, the switch is transparent to your clients. Otherwise, you need to reconfigure your artifact clients.

We have a list of client configuration tutorials that explain how to configure your clients to fetch artifacts from Orca.

Here are a few common examples.

NPM example

To fetch NPM packages from Orca, simply add a --registry option that points to Orca:

```shell
npm install --registry=https://artifactory.orca.example.com
```

Have a look at our NPM client configuration tutorial for more information.

Go example

Similarly, for Go you can set the GOPROXY environment variable to the endpoint of Orca:

```shell
GOPROXY=https://artifactory.orca.example.com go mod tidy
```
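When GOPROXY points at Orca, the go command fetches modules using the GOPROXY protocol, so those protocol URLs are the requests Orca receives and caches. The sketch below builds the main endpoints the go command may request for one module version (which ones it actually requests depends on the operation), including the protocol's case-encoding, which writes each upper-case letter as ! followed by its lower-case form. The escapeCase and moduleURLs names are hypothetical, and the base URL is the Orca hostname from this tutorial's example configuration.

```go
package main

import (
	"fmt"
	"strings"
)

// escapeCase applies the GOPROXY case-encoding: each upper-case ASCII letter
// becomes '!' followed by its lower-case form, so paths stay unambiguous on
// case-insensitive file systems.
func escapeCase(s string) string {
	var b strings.Builder
	for _, r := range s {
		if r >= 'A' && r <= 'Z' {
			b.WriteByte('!')
			b.WriteRune(r - 'A' + 'a')
		} else {
			b.WriteRune(r)
		}
	}
	return b.String()
}

// moduleURLs returns the main GOPROXY protocol endpoints for one module
// version, relative to the proxy base URL (here, the Orca endpoint).
func moduleURLs(base, module, version string) []string {
	prefix := strings.TrimSuffix(base, "/") + "/" + escapeCase(module) + "/@v/"
	v := escapeCase(version)
	return []string{
		prefix + "list",      // versions known to the proxy (changes over time)
		prefix + v + ".info", // JSON metadata about the version
		prefix + v + ".mod",  // the module's go.mod file
		prefix + v + ".zip",  // the module source archive
	}
}

func main() {
	for _, u := range moduleURLs("https://artifactory.orca.example.com", "github.com/BurntSushi/toml", "v1.3.2") {
		fmt.Println(u)
	}
}
```

For example, the last line printed is https://artifactory.orca.example.com/github.com/!burnt!sushi/toml/@v/v1.3.2.zip. The .info, .mod, and .zip files of a released version never change, which makes them ideal cache candidates, while @v/list changes as new versions are published.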

Have a look at our Go client configuration tutorial for more information.

Docker example

For Docker images, you can re-tag your images and push them through Orca:

```shell
docker tag artifactory.example.com/docker/todo artifactory.orca.example.com/docker/todo
docker push artifactory.orca.example.com/docker/todo
```

This assumes you have an Artifactory registry for Docker named docker that is hosted on artifactory.example.com. The todo image is re-tagged to artifactory.orca.example.com/docker/todo and pushed to Artifactory via Orca on https://artifactory.orca.example.com.

You can then pull the image as follows:

```shell
docker pull artifactory.orca.example.com/docker/todo
```

Instead of re-tagging your images, you can also configure your Docker daemon to use Orca as a registry mirror. We have a Kubernetes tutorial that explains how to reconfigure containerd and use the Orca endpoint as a registry mirror.
